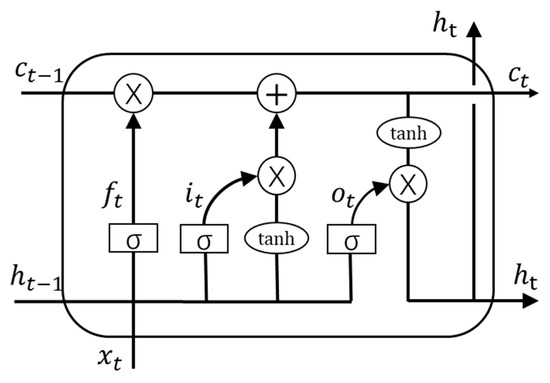

A Keras LSTM classifier for sentiment labels:

    import numpy as np
    from keras.models import Sequential
    from keras.layers import LSTM, Dense

    # number of distinct sentiment labels
    num_classes = len(np.unique(data['sentiment']))

    model_lstm = Sequential()
    model_lstm.add(LSTM(64, input_shape=(10, 1)))
    model_lstm.add(Dense(32, activation='relu'))
    model_lstm.add(Dense(num_classes, activation='softmax'))
    # model_lstm.compile(l...

Printing the testing error and plotting the fit of the EURUSD model:

    import matplotlib.pyplot as plt

    print('testing error = ' + str(testing_error))

    # training fit in blue, testing fit in red
    split_pt = train_test_split + window_size
    plt.plot(np.arange(window_size, split_pt, 1), train_predict, color='b')
    plt.plot(np.arange(split_pt, split_pt + len(test_predict), 1), test_predict, color='r')
    plt.ylabel('(normalized) price of EURUSD')
    # plt.legend(..., loc='center left', bbox_to_anchor=(1, 0...

Information is retained by the cells, and the memory manipulations are done by the gates.

Forget Gate: Information that is no longer useful in the cell state is removed by the forget gate. Two inputs, x_t (the input at the current time step) and h_t-1 (the previous cell output), are fed to the gate and multiplied with weight matrices, followed by the addition of a bias. The result is passed through a sigmoid activation function, which gives an output between 0 and 1. If the output for a particular cell state is close to 0, that piece of information is forgotten; if it is close to 1, the information is retained for future use. The equation for the forget gate is:

f_t = σ(W_f · [h_t-1, x_t] + b_f)

Here W_f is the weight matrix associated with the forget gate, b_f is its bias, and σ is the sigmoid function.
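As a rough illustration of the forget gate, the sketch below multiplies the concatenated h_t-1 and x_t by W_f, adds a bias, and applies a sigmoid. The layer sizes, the random weights, and the names `forget_gate`, `b_f` and `c_prev` are assumptions made for this example only; a trained LSTM learns W_f and b_f from data.

```python
import numpy as np

def sigmoid(z):
    """Squash values into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def forget_gate(x_t, h_prev, W_f, b_f):
    """Compute f_t = sigmoid(W_f . [h_prev, x_t] + b_f)."""
    concat = np.concatenate([h_prev, x_t])
    return sigmoid(W_f @ concat + b_f)

# Hypothetical sizes and random values, chosen only for illustration
rng = np.random.default_rng(0)
hidden_size, input_size = 4, 3
W_f = rng.normal(size=(hidden_size, hidden_size + input_size))
b_f = np.zeros(hidden_size)
x_t = rng.normal(size=input_size)       # input at the current time step
h_prev = rng.normal(size=hidden_size)   # previous cell output
c_prev = rng.normal(size=hidden_size)   # previous cell state

f_t = forget_gate(x_t, h_prev, W_f, b_f)
kept = f_t * c_prev  # element-wise: the share of the old cell state that survives
print(f_t)
print(kept)
```

Each entry of `f_t` lies strictly between 0 and 1, so the gate scales each component of the old cell state down rather than switching it fully on or off.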

Long Short-Term Memory (LSTM) is a deep learning, sequential neural network architecture that allows information to persist. It is a special type of recurrent neural network (RNN) that is capable of handling the vanishing gradient problem faced by standard RNNs.

I have built an LSTM Sequential model for Forex M15 values, specifically for the pair EURUSD, with typical_price as the price type. After setting up and training the model, I would like to predict (extrapolate) the typical_price for one future day.

    import numpy as np
    import pandas as pd  # data processing, CSV file I/O (e.g. pd.read_csv)
    from keras.models import Sequential
    from keras.layers import LSTM, Dense, Activation, Dropout
    from sklearn.model_selection import StratifiedKFold, train_test_split
    from sklearn.preprocessing import MinMaxScaler
    from sklearn.metrics import mean_squared_error

    # df.columns = ...
    # df = df.str.replace('.', '-')
    scaler = MinMaxScaler(feature_range=(0, 1))

    def window_transform_series(series, window_size):
        for i in range(window_size, len(series)):
            ...

    dataX, datay = window_transform_series(series=df, window_size=window_size)

    # set the split point (this variable shadows sklearn's train_test_split)
    train_test_split = int(np.ceil(2 * len(datay) / float(3)))

    # NOTE: to use keras's RNN LSTM module our input must be reshaped
    X_train = np.asarray(np.reshape(X_train, (X_train.shape[0], window_size, 1)))
    X_test = np.asarray(np.reshape(X_test, (X_test.shape[0], window_size, 1)))

    # Build an RNN to perform regression on our time series input/output data
    model = Sequential()
    model.add(LSTM(5, input_shape=(window_size, 1)))
    model.compile(loss='mean_squared_error', optimizer=optimizer)
    model.fit(X_train, y_train, epochs=500, batch_size=64, verbose=1)

    training_error = model.evaluate(X_train, y_train, verbose=0)
    print('training error = ' + str(training_error))
    testing_error = model.evaluate(X_test, y_test, verbose=0)

In my dataset I took the data for one month (January 2017), from the 1st to the 30th, as the training and testing dataset (1920 values). Now I would like to extrapolate the prices for the 31st of January. I cannot work out what input data and shape the model expects in order to extrapolate from the last value of the 30th of January. Can someone give me a hint or explain what the function model.predict() needs as input values?
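On the question of what model.predict() expects: a Keras LSTM layer declared with input_shape=(window_size, 1) takes a 3-D array of shape (samples, window_size, 1). To predict the next value, the last window_size observations can be reshaped to (1, window_size, 1). The sketch below shows that reshaping and one possible way to extrapolate several steps by feeding each prediction back into the window. It is a minimal sketch, not the poster's code: `make_predict_input`, `forecast` and `dummy_predict` are hypothetical names, and `dummy_predict` is only a stand-in for `model.predict` so the example runs without a trained model.

```python
import numpy as np

def make_predict_input(series, window_size):
    """Shape the last `window_size` values as (1, window_size, 1)."""
    last_window = np.asarray(series, dtype=float)[-window_size:]
    if last_window.shape[0] != window_size:
        raise ValueError("series is shorter than window_size")
    return last_window.reshape(1, window_size, 1)

def forecast(predict_fn, series, window_size, n_steps):
    """Predict n_steps ahead, sliding the window over its own predictions."""
    window = list(np.asarray(series, dtype=float)[-window_size:])
    preds = []
    for _ in range(n_steps):
        x = np.asarray(window).reshape(1, window_size, 1)
        y_hat = float(np.asarray(predict_fn(x)).ravel()[0])
        preds.append(y_hat)
        window = window[1:] + [y_hat]  # drop the oldest value, append the newest
    return np.array(preds)

def dummy_predict(x):
    """Stand-in for model.predict: returns the window mean, shape (batch, 1)."""
    return x.mean(axis=1)

# Hypothetical normalized series, used only to show the shapes
series = np.linspace(0.0, 1.0, 20)
x_next = make_predict_input(series, window_size=7)
print(x_next.shape)
preds = forecast(dummy_predict, series, window_size=7, n_steps=4)
print(preds.shape)
```

With a trained model, `model.predict` would be passed in place of `dummy_predict`. The input values would need the same MinMaxScaler scaling that was used for training, and the predictions could be mapped back to prices with the scaler's inverse_transform. For 15-minute bars, n_steps would be up to 96 for a full 24-hour day. Errors tend to accumulate when predictions are fed back in this way, so longer horizons are less reliable.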
